Ensemble was founded nearly a decade ago on two beliefs: that proprietary data would reshape the most important companies of the future, and that America's physical economy needed to be reinvented. For a long time, those felt like separate convictions.

What's changed is that American reindustrialization, across defense, energy, housing, and manufacturing, has shifted from an outlier goal to something close to consensus. And as that ambition has grown, so have the stakes of how it gets done. The companies and policymakers driving this moment are beginning to understand that rebuilding America's physical economy on the old model isn't really reinvention.

We share in the excitement of the moment. But the more time we spend with founders building in the physical world, the more clearly we see that what separates the companies that will matter from those that won't isn't just producing better things, but building systems that learn.

Companies building physical products on this model treat AI not as a layer added on top of existing operations, but as a foundational design decision. We think this is the framework through which serious reindustrialization will actually be achieved, but it requires a disciplined and forward-looking implementation of AI.

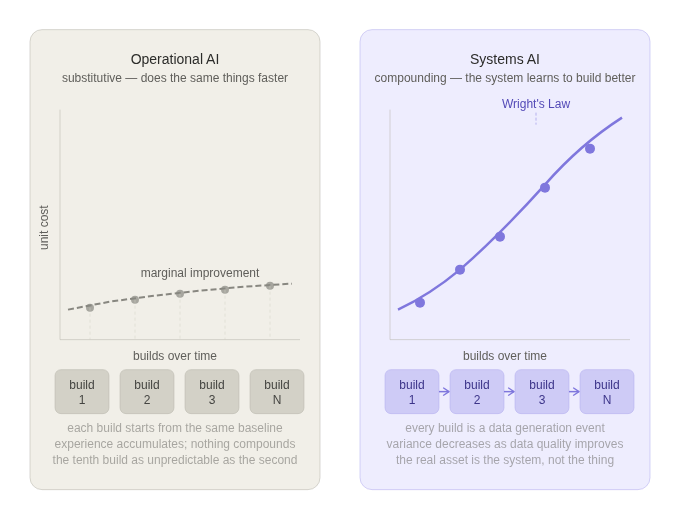

There are two ways AI creates value in physical companies. Only one compounds.

Companies building for the physical world usually want AI to do two different jobs.

The first is operational AI: quality control, predictive maintenance, anomaly detection, scheduling, workflow optimization, etc. It matters, it can create real value, and it's what most people picture when they hear "AI-powered manufacturing." But operational AI is fundamentally substitutive — it does what you were already doing, faster and cheaper. In most cases, it improves the operation at the margins more than it changes the core economics of the business.

The second is systems AI: the model that learns how to build the thing better, predicts how design changes affect production outcomes, and eventually helps drive autonomy in the system itself. That is where the moat forms.

Most physical AI companies miss this distinction.

They "apply AI" to their production data and wonder why the gains don't compound. That question is itself a signal: if you're discovering this after the fact, your process was probably never designed to generate the kind of data that compounds. AI can find patterns in chaos, but it cannot learn from a process with no consistent past.

The winners treat every build as a data generation event, feeding what they learn back into the next unit, improving efficiency and cutting costs with each iteration. The real asset isn't the thing they built; it's the system that built it and the data that system accumulates over time. Companies that understand this find that iteration-to-iteration variance decreases as data quality improves, accelerating learning and widening the moat with each cycle. The only path onto the compounding curve is through disciplined repetition. This is the logic of Wright’s Law, which observes that unit cost falls by a roughly constant percentage each time cumulative production doubles.
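To make the compounding curve concrete, here is a minimal sketch of Wright's Law in Python. The function name `unit_cost`, the first-unit cost of 100, and the 20% learning rate are illustrative assumptions, not figures from any company discussed here.

```python
import math


def unit_cost(first_unit_cost: float, cumulative_units: int, learning_rate: float) -> float:
    """Cost of the n-th unit under Wright's Law.

    learning_rate is the fractional cost drop per doubling of cumulative
    output (0.20 means each doubling cuts unit cost by 20%).
    """
    if cumulative_units < 1:
        raise ValueError("cumulative_units must be at least 1")
    if not 0.0 <= learning_rate < 1.0:
        raise ValueError("learning_rate must be in [0, 1)")
    # Wright's Law: C_n = C_1 * n ** b, with b = log2(1 - learning_rate) <= 0.
    exponent = math.log2(1.0 - learning_rate)
    return first_unit_cost * cumulative_units ** exponent


# Illustrative numbers only (assumptions, not data from the article).
for n in (1, 2, 4, 8):
    learning = unit_cost(100.0, n, learning_rate=0.20)
    flat = unit_cost(100.0, n, learning_rate=0.0)
    print(f"unit {n}: with learning {learning:.1f}, without {flat:.1f}")
```

Under these assumed inputs, three doublings bring the eighth unit to roughly half the cost of the first, while a learning rate of zero leaves every unit as expensive as the first. The curve is indexed by cumulative comparable units, which is why repetition matters: builds that differ too much to learn from do not move a company along it.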

Why now

For most of industrial history, "closing the loop" required elapsed time. The loop here is a production cycle: design something, build it, observe how it performs, and feed that back into the next iteration. In practice, a full cycle took months or years at full scale; failures had to accumulate, variance had to become visible, and only then could the process be meaningfully updated. Incumbents won because they had more completed cycles behind them, and a late entrant couldn't compress the time it took to overcome a learning curve.

Two forces are rewriting that dynamic, and they're working together for the first time.

- The first force is the closing of the sim-to-real gap, historically one of the great hurdles to physical AI. While it would be wrong to say the gap between simulation-based training and real-world environments is disappearing uniformly, it is starting to close in specific, measurable ways that matter. Physics engines can now model fluid dynamics, material stress, and thermal behavior with a fidelity that was computationally impossible five years ago — a meaningful unlock for domains such as maritime, construction, aerospace, and energy.

And while the gap persists for complex physical interactions and high-dimensional sensory data, full closure isn't actually required to deliver value. What you need is a simulation good enough to rank a series of simulated experiments. Rather than running every experiment in the physical world, engineers can work from a data-driven comparison that surfaces the most promising configurations — reserving physical runs for the candidates most likely to advance the learning cycle, and discarding the rest in software.

- The second force is AI compressing the feedback path. The time between observing a variance and encoding its fix used to be measured in engineering cycles: weeks of analysis, design reviews, and change orders. That is now collapsing toward days.

Taken separately, each force would be significant. Taken together, they're changing the fundamental economics of the learning curve. Faster simulation means more laps before the factory exists. Faster feedback means more learning extracted from each lap. The loop isn't just running faster; it is getting smarter faster than any prior generation of industrial technology allowed.

Until recently, the limiting factor was always time, not capital. A challenger could raise more money but couldn't buy elapsed production cycles. That constraint is eroding. What used to take a decade to become insurmountable now takes two or three years. The window is shorter than most founders think, and it's closing.
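The point about simulation only needing to rank experiments can be sketched in a few lines. This is a toy illustration under stated assumptions: `Candidate`, `select_for_physical_runs`, `toy_simulator`, and the wall-thickness parameter are all hypothetical names invented here, and the scoring function stands in for whatever physics engine a real team would use.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass
class Candidate:
    """One design configuration to evaluate (hypothetical structure)."""
    name: str
    params: dict[str, float]


def select_for_physical_runs(
    candidates: Sequence[Candidate],
    simulate: Callable[[Candidate], float],
    budget: int,
) -> list[Candidate]:
    """Rank candidates by simulated score (higher is better) and keep the top `budget`."""
    if budget < 0:
        raise ValueError("budget must be non-negative")
    ranked = sorted(candidates, key=simulate, reverse=True)
    return ranked[:budget]


def toy_simulator(candidate: Candidate) -> float:
    """Stand-in for a physics simulation: scores closeness to an assumed 3.0 mm optimum."""
    thickness = candidate.params["wall_thickness_mm"]
    return -((thickness - 3.0) ** 2)


candidates = [
    Candidate("A", {"wall_thickness_mm": 2.0}),
    Candidate("B", {"wall_thickness_mm": 3.1}),
    Candidate("C", {"wall_thickness_mm": 4.5}),
    Candidate("D", {"wall_thickness_mm": 2.8}),
]

# Only two physical runs are affordable in this example; the rest are discarded in software.
shortlist = select_for_physical_runs(candidates, toy_simulator, budget=2)
print([c.name for c in shortlist])
```

The selection depends only on the ordering the simulator produces, not on the absolute scores. A simulator that is wrong about magnitudes but right about which configuration beats which would yield the same shortlist, which is the sense in which full sim-to-real closure is not required.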

What we’ve seen work

The founders who recognize this trend are flipping the conventional production sequence. Instead of prototype → PMF → figure out manufacturing, they let manufacturing requirements shape the product from inception.

Saronic's head of manufacturing, recruited from SpaceX, was their third employee, hired before they had a prototype. The result: 80% hardware consistency across platforms, seven core modular components, a fully vertically integrated supply chain. CEO Dino Mavrookas’s line, "if we can't build thousands of autonomous surface vessels, we shouldn't build any at all," encapsulates that inversion. Design for its own sake is losing precedence; in the new paradigm, design should adapt to at-scale manufacturing needs from the outset.

In the same model, ICON built their manufacturing system – the Vulcan printer, the Magma mixing unit, BuildOS – before they had commercial scale. Each house printed by ICON’s robots informs how the very next house is printed; as builds accumulate, wall cost falls.

Both are in our portfolio, which means we've watched closely enough to know the thesis isn't a guarantee. Manufacturing-led design rewards founders who combine it with genuine judgment about timing, knowing which variance to absorb and which to turn away.

The project trap and why discipline alone won't save you

When each new build starts from the same baseline as the last, when the system fails to learn and costs don't decrease, you're in the project trap. The tenth build is just as unpredictable and unprofitable as the second. Experience accumulates but nothing compounds.

The trap is insidious because it feels like progress. Custom projects bring in revenue, please investors, and attract attention. Katerra raised nearly $3 billion on exactly the right pitch (factory-built components, vertical integration, costs driven down through scale), but fell victim to the project trap. They said yes to everything. The factory never repeated the same thing twice and never learned efficiently from those iterations. Six years later? Chapter 11.

Fisker, Proterra, Solyndra: different industries, same ending.

The incentives that sustain the trap feel reasonable at every step. That's what makes it hard to escape.

Most founders who end up in the trap aren't there because they never understood the compounding model — they understood it fine. They fail because the incentives inside a fast-growing hard tech company actively work against loop discipline. BD is compensated on revenue. Engineering is perpetually under-resourced. A customer is ready to buy now, and interest is hard to revive if delayed.

The solution has to be structural, not just cultural:

- BD comp tied to margin and repeatability, not just revenue closed.

- Engineering with real sign-off on commercial commitments.

- Capacity targets set by what the loop can absorb, not what the pipeline demands.

These are hard to implement in a revenue-starved early-stage company. But the alternative is an organization that understands the theory and violates it systematically anyway. We've seen that too, and it's harder to watch than founders who never understood the theory at all.

A simple test

When demand doubles, does the company get better or just get busier?

Projects get busier. Products get better.

The founders who build lasting moats don't just understand the loop. They build organizations where the loop never stops running, where every exception gets interrogated, every variance absorbed, and the system comes out smarter on the other side.

We've built Ensemble the same way. A dedicated data science and engineering team has been half the firm since inception — applying the same systems AI logic to venture capital that we look for in the companies we back. The loop comes first. We design our process to learn from every iteration.

Some of our most important relationships started with tinkerers and napkin sketches. If you're still figuring out the shape of the thing but thinking about it the right way, we’d love to chat. We invest from pre-incorporation through Series A, and we're most useful earliest.

If any of this resonates, we want to meet you.
